Abstract

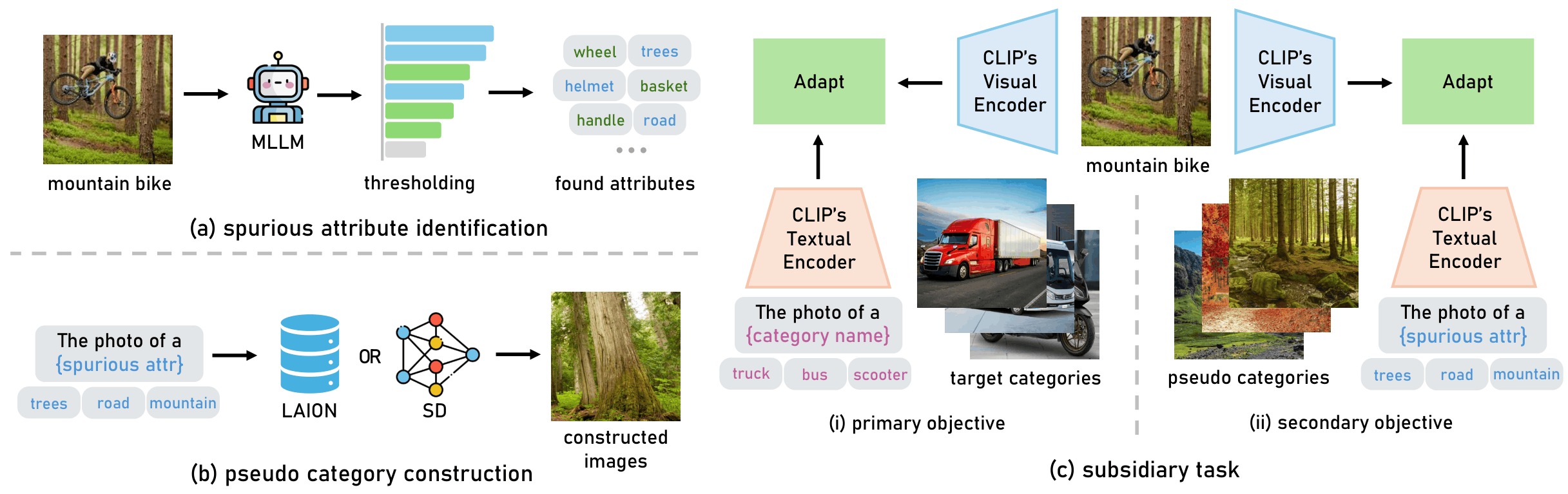

Vision-Language Models (VLMs) such as CLIP suffer from spurious correlations — shortcut features that are statistically correlated with class labels in training data but absent from or misleading in out-of-distribution images. We introduce SAS (Spurious Attribute Shielding), a plug-and-play subsidiary training task that explicitly shields VLMs from spurious attributes. SAS first uses a two-stage Spurious Attribute Probing (SAP) pipeline — combining MLLM querying and Concept Bottleneck Model probing — to automatically identify per-class spurious attributes. It then constructs pseudo categories from these spurious attributes and trains the model with a local contrastive loss (L_pse) that forces the model to discriminate the real class from its spurious pseudo-category counterparts. A selective optimization trick further focuses training on the classes with the highest spurious correlation, improving efficiency and effectiveness.

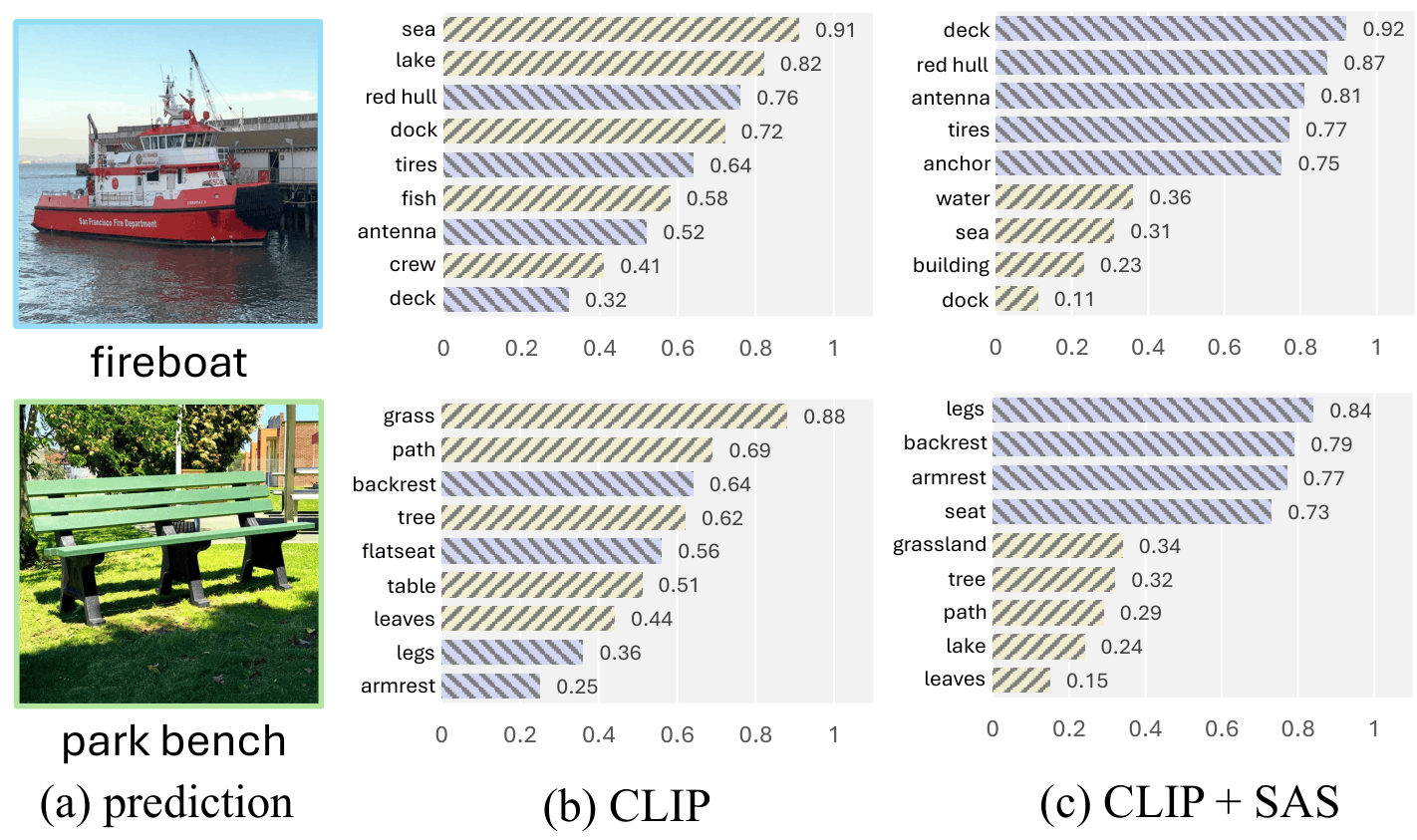

Motivation: Spurious Attributes as Black Sheep

In few-shot fine-tuning, VLMs latch onto spuriously correlated image attributes — background textures, co-occurring objects, lighting conditions — that appear reliably with a class in training data but do not represent the class itself. For example, a model trained to recognize seagulls may rely on the presence of the ocean rather than the bird's visual features, failing catastrophically when seagulls appear in other contexts. These "black sheep" attributes degrade both novel-class generalization and out-of-distribution robustness.

Method: Spurious Attribute Shielding (SAS)

Stage 1 — SAP (Spurious Attribute Probing):

- Q1: Ask an MLLM "List all visual cues you see in the photo" on context images → candidate non-core attributes.

- Q2: Ask the MLLM to filter out candidates that are intrinsic properties of the class → confirmed non-core (spurious-candidate) attributes.

- Q3: Ask the MLLM "Describe {class} in detail" → core visual attributes.

- CBM Probing: Train a linear Concept Bottleneck Model (CBM) on CLIP image–attribute similarity scores. A candidate attribute j is deemed spurious for class c if its weight W_cj ≥ γ_c, where γ_c is the minimum weight among the core attributes of class c.
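The CBM probing rule can be sketched in a few lines. This is a minimal illustration, not the authors' code: the page does not specify how the linear CBM is trained, so `fit_linear_cbm` assumes plain bias-free softmax regression on precomputed CLIP image–attribute similarities, and both function names and the `core` / `candidates` dictionaries are hypothetical. Only the thresholding step (W_cj ≥ γ_c, with γ_c the smallest core-attribute weight) comes from the description above.

```python
import numpy as np


def fit_linear_cbm(sims, labels, num_classes, lr=0.5, epochs=500):
    """Fit a bias-free linear CBM by softmax regression (assumed setup).

    sims:   (N, A) image-attribute similarity scores, assumed precomputed with CLIP
    labels: (N,)   integer class labels
    Returns W of shape (num_classes, A); W[c, j] is attribute j's weight for class c.
    """
    n, a = sims.shape
    W = np.zeros((num_classes, a))
    onehot = np.eye(num_classes)[labels]
    for _ in range(epochs):
        logits = sims @ W.T
        logits -= logits.max(axis=1, keepdims=True)  # numerical stability
        probs = np.exp(logits)
        probs /= probs.sum(axis=1, keepdims=True)
        grad = (probs - onehot).T @ sims / n  # gradient of mean cross-entropy w.r.t. W
        W -= lr * grad
    return W


def spurious_attributes(W, core, candidates):
    """Probing rule: candidate j is spurious for class c if W[c, j] >= gamma_c,
    where gamma_c is the smallest weight among the core attributes of class c.

    core, candidates: dict mapping class index -> list of attribute indices
    """
    result = {}
    for c in range(W.shape[0]):
        gamma_c = W[c, core[c]].min()
        result[c] = [j for j in candidates[c] if W[c, j] >= gamma_c]
    return result
```

The intuition behind the threshold: if the probe leans on a non-core attribute at least as heavily as on the weakest genuinely defining attribute, that attribute is acting as a shortcut for the class.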

Stage 2 — SAS Training:

- For each class c, construct a local set:

local_set_c = {class c} ∪ {pseudo categories from the spurious attributes of c}.

- Optimize the subsidiary loss L_pse (cross-entropy within each local set) alongside the primary classification loss:

L = L_ce + λ · L_pse.

- Pseudo images representing each spurious attribute are synthesized via Stable Diffusion or retrieved from LAION-5B.

- A selective optimization trick applies L_pse only to the top 10% of classes ranked by Spurious Correlation Ratio (SCR), keeping overhead minimal.
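The Stage 2 objective can be sketched as follows. This is an illustrative NumPy forward pass under stated assumptions, not the authors' implementation: it assumes CLIP-style cosine-similarity logits with a temperature of 0.01, local targets where index 0 is the real class c and indices 1..K are its pseudo categories, L_pse averaged over the selected classes, and per-class SCR scores supplied as input (how SCR is computed is not shown here). All function names and defaults (`local_pse_loss`, `select_classes`, `total_loss`, `lam=1.0`) are hypothetical.

```python
import numpy as np


def cross_entropy(logits, target):
    """Cross-entropy of one logit vector against an integer target."""
    logits = logits - logits.max()  # numerical stability
    log_probs = logits - np.log(np.exp(logits).sum())
    return -log_probs[target]


def l2_normalize(x, axis=-1):
    return x / np.linalg.norm(x, axis=axis, keepdims=True)


def local_pse_loss(img_feats, local_targets, local_text_feats, temperature=0.01):
    """Cross-entropy restricted to one class's local set (assumed formulation).

    img_feats:        (M, D) features of real images of class c and of pseudo images
    local_targets:    (M,)   0 = real class c, k >= 1 = k-th pseudo category
    local_text_feats: (1 + K, D) text embeddings of the local-set entries
    """
    logits = l2_normalize(img_feats) @ l2_normalize(local_text_feats).T / temperature
    return float(np.mean([cross_entropy(l, t) for l, t in zip(logits, local_targets)]))


def select_classes(scr, frac=0.1):
    """Indices of the top `frac` fraction of classes by SCR (at least one class)."""
    k = max(1, int(round(frac * len(scr))))
    return set(np.argsort(scr)[::-1][:k].tolist())


def total_loss(l_ce, pse_losses_by_class, scr, lam=1.0, frac=0.1):
    """L = L_ce + lam * L_pse, with L_pse averaged over the selected classes only."""
    selected = select_classes(scr, frac)
    terms = [loss for c, loss in pse_losses_by_class.items() if c in selected]
    l_pse = float(np.mean(terms)) if terms else 0.0
    return l_ce + lam * l_pse
```

Restricting the softmax to a local set means the real class only competes with its own spurious look-alikes, so the gradient specifically penalizes confusing "seagull" with, say, an ocean-only pseudo category, rather than with unrelated classes.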

Results

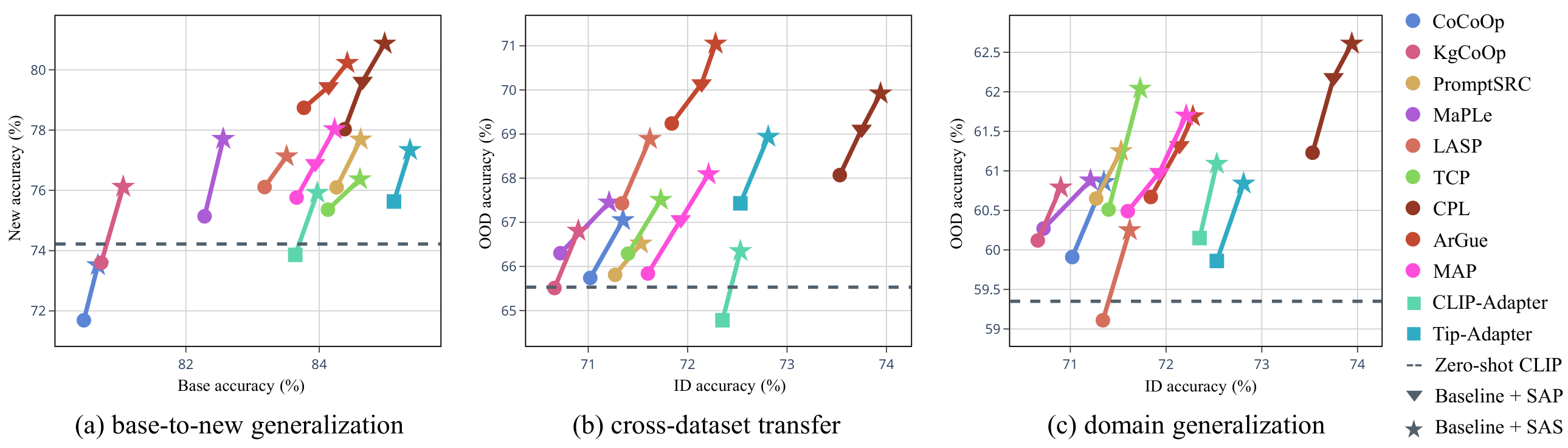

Base-to-New Generalization

- SAP and SAS consistently improve new class accuracy across all 11 datasets without compromising base accuracy.

- On strong baselines such as CPL, integrating SAP + SAS achieves a new state-of-the-art harmonic mean.

- Over 50% of images in most benchmarks contain spurious attributes, which helps explain the large gains.
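Base-to-new benchmarks conventionally summarize base and new accuracy with their harmonic mean H; assuming the results above follow that convention, it is computed as:

```latex
H = \frac{2 \cdot \mathrm{Acc}_{\text{base}} \cdot \mathrm{Acc}_{\text{new}}}{\mathrm{Acc}_{\text{base}} + \mathrm{Acc}_{\text{new}}}
```

Because H is weighted toward the smaller of the two accuracies, raising new-class accuracy while holding base accuracy fixed increases H, which is how new-class gains without a base-class penalty translate into a higher harmonic mean.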

Cross-Dataset Transfer & Domain Generalization

- SAS consistently improves out-of-distribution accuracy across cross-dataset transfer and all four ImageNet variants (V2, Sketch, A, R).

- The gains are complementary to the baseline: applying SAS to each parameter-efficient fine-tuning (PEFT) method evaluated yields a consistent upward shift on the accuracy frontier.

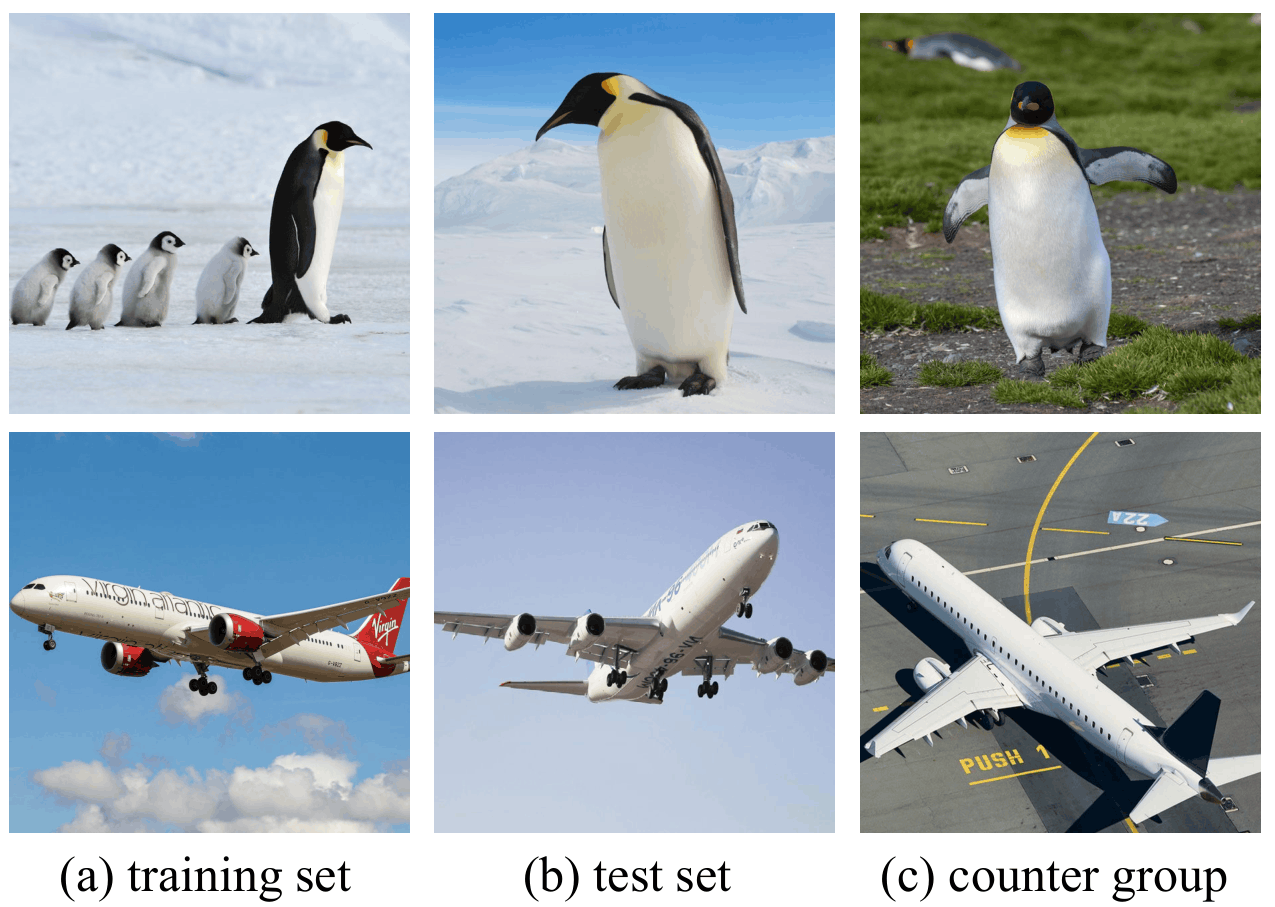

Counter Group Evaluation

- We construct a counter group — a test subset with spurious attributes removed — to directly measure reliance on spurious cues.

- SAS narrows the accuracy gap between the standard test set and the counter group by up to ~6%, indicating that it suppresses shortcut learning rather than simply adding data.
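The counter-group measurement reduces to comparing two accuracies. A minimal sketch, assuming predictions and labels are already available for both the standard test set and the counter group; the function names are hypothetical. A smaller gap after adding SAS would indicate less reliance on spurious cues.

```python
import numpy as np


def accuracy(preds, labels):
    """Fraction of predictions that match the labels."""
    return float(np.mean(np.asarray(preds) == np.asarray(labels)))


def counter_group_gap(test_preds, test_labels, counter_preds, counter_labels):
    """Accuracy on the standard test set minus accuracy on the counter group.

    A large positive gap suggests the model depends on spurious attributes
    that are present in the standard test set but absent from the counter group.
    """
    return accuracy(test_preds, test_labels) - accuracy(counter_preds, counter_labels)
```

The reduction attributable to SAS is then the baseline's gap minus the gap of the same model trained with SAS.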

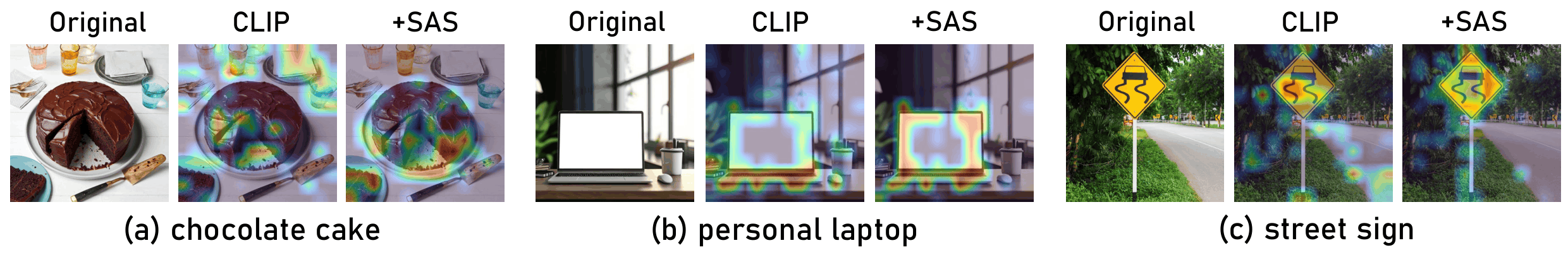

Saliency Visualization

- Without SAS, VLMs activate large spurious background regions (e.g. road for street sign, plate for chocolate cake).

- With SAS, attention shifts onto the main object, matching the expected class-discriminative regions.

BibTeX

@article{tian2025black,
  title={Black sheep in the herd: Playing with spuriously correlated attributes for vision-language recognition},
  author={Tian, Xinyu and Zou, Shu and Yang, Zhaoyuan and He, Mengqi and Zhang, Jing},
  journal={arXiv preprint arXiv:2502.15809},
  year={2025}
}